Björn Brala

Technical Director

More and more organizations want to harness the possibilities of AI, but in a responsible manner. In practice, that responsibility often takes the form of strict security requirements. Security and control are essential, especially in government, healthcare, and education.

With Vragen.ai, we help organizations work safely with AI, within their own knowledge and content. This way, they can innovate and keep pace with technological developments without losing control over their data and answers.

Security as a Basis

At SWIS, the company behind Vragen.ai, we believe that safe AI use begins with thoughtful decisions. That's why we build Vragen.ai on a strong foundation. SWIS is ISO 27001 and NEN 7510 certified. This means Vragen.ai is developed and managed under audited information security standards, including the standard that applies to healthcare environments.

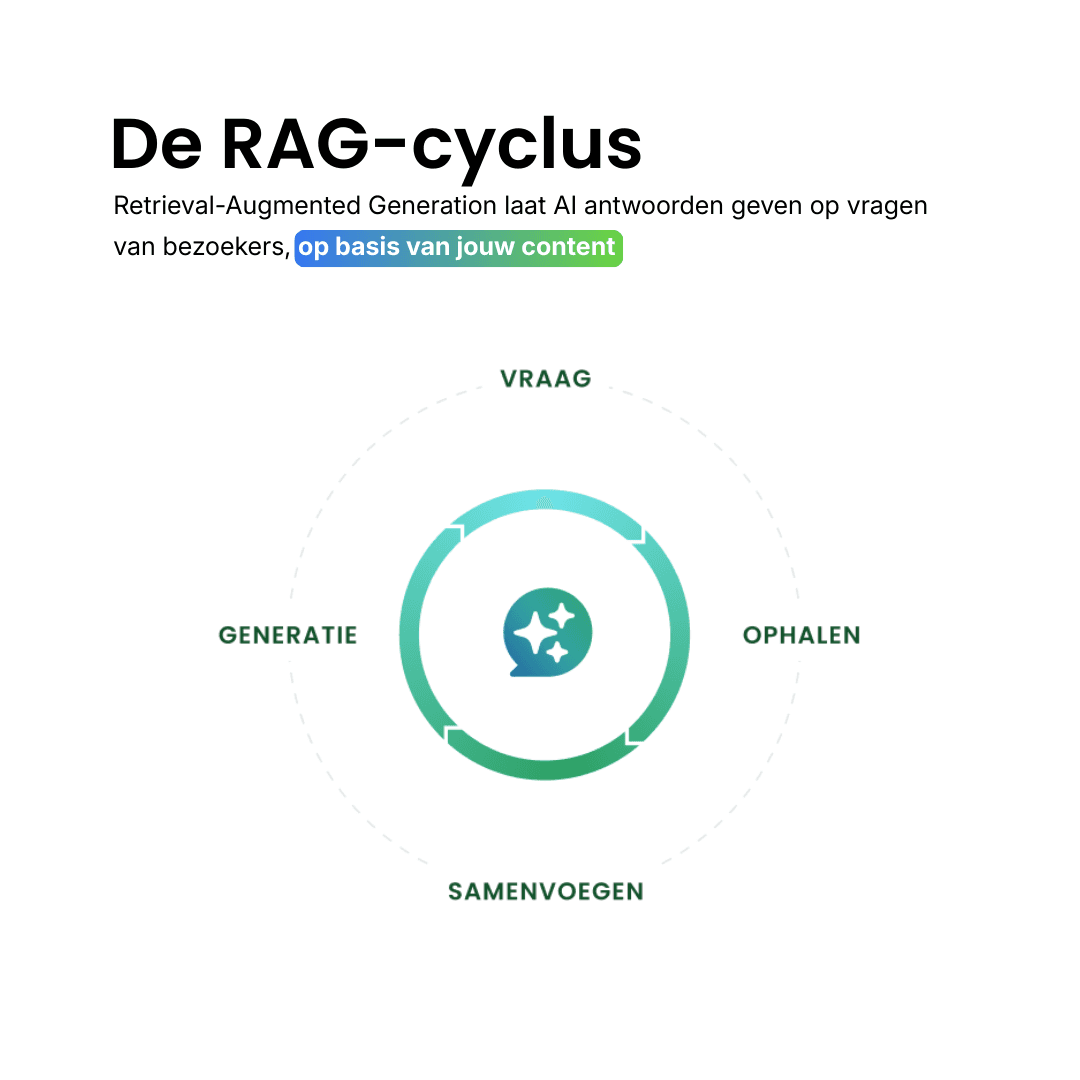

Vragen.ai is built on Retrieval-Augmented Generation (RAG). Answers always come from verified sources that the organization itself controls. This way, you know not only what the AI says but also where the answer comes from. Vragen.ai backs up every answer with links to the sources used.
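To make the idea concrete, here is a minimal sketch of the retrieve-then-answer pattern with source links. It is not the Vragen.ai implementation: the `Document` class, the keyword-overlap scoring, the function names, and the example URLs are all assumptions chosen for illustration, and the call to a language model is left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    """A verified source in the organization's knowledge base (hypothetical shape)."""
    url: str
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase the words and strip basic punctuation."""
    words = (word.strip(".,?!").lower() for word in text.split())
    return {word for word in words if word}


def retrieve(question: str, knowledge_base: list[Document], top_k: int = 1) -> list[Document]:
    """Rank documents by naive keyword overlap; real systems typically use embeddings."""
    question_tokens = tokenize(question)
    scored = [(len(question_tokens & tokenize(doc.text)), doc) for doc in knowledge_base]
    matches = [pair for pair in scored if pair[0] > 0]
    matches.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in matches[:top_k]]


def answer_with_sources(question: str, knowledge_base: list[Document]) -> dict:
    """Build a prompt grounded in retrieved sources and return the source links with it."""
    sources = retrieve(question, knowledge_base)
    if not sources:
        # Nothing in the knowledge base matches, so no prompt is built.
        return {"prompt": None, "sources": []}
    context = "\n".join(f"[{i}] {doc.text}" for i, doc in enumerate(sources, start=1))
    prompt = (
        "Answer using only the numbered sources below.\n"
        f"{context}\n\nQuestion: {question}"
    )
    return {"prompt": prompt, "sources": [doc.url for doc in sources]}
```

The point of the sketch is the return value: the source URLs travel together with the prompt, so whatever answer is generated can be shown next to the documents it was based on.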

Read more about how Vragen.ai works

Testing Together in Practice

During an implementation project with a client, we went a step further than usual. We had one clear goal in mind:

“The proof of concept is successful if we can demonstrate that the smart (AI) search engine cannot be manipulated into giving risky or harmful answers that could be detrimental in any way.”

Over several testing rounds, more than 900 questions were asked, analyzed, and assessed for reliability, tone, and safety. This provided valuable insights into how Vragen.ai can best be configured for this client. In particular, the way the AI searches within the organization's own content proved crucial for the quality of the answers.

Self-testing is valuable, but true assurance comes when an outsider examines the system. That's why we had an ethical hacker independently verify whether Vragen.ai stands firm under pressure.

Independent Assessment

The test was conducted in September 2025 under the Cyber Security Pen Test certification of the CCV. That certification confirms that the test meets high quality standards. The ethical hacker examined whether user data could be accessed, whether the AI could be influenced with specific prompts, and how well administrator functions were protected.

The conclusion: the security of Vragen.ai holds up well. No critical risks were found. The integrity and confidentiality of data are well safeguarded.

The test revealed two areas for improvement. In some situations, the output could show links and images from outside the knowledge base, and it was possible to temporarily change the AI’s tone through targeted prompts.

We addressed both issues immediately. The system prompt has been tightened, output is restricted to verified sources, and the AI holds its tone more consistently. It deviates from that tone only at the user's request, and this is always clearly indicated.
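One way to restrict output to verified sources is to filter links and images in the generated answer against an allowlist. The sketch below illustrates that idea under stated assumptions: the `ALLOWED_HOSTS` value, the function name, and the choice to handle only Markdown-style links are hypothetical and do not describe how Vragen.ai implements its fix.

```python
import re
from urllib.parse import urlparse

# Hypothetical allowlist: hosts that belong to the organization's knowledge base.
ALLOWED_HOSTS = frozenset({"kb.example.org"})

# Matches Markdown links [label](url) and images ![alt](url).
MARKDOWN_LINK = re.compile(r"(!?)\[([^\]]*)\]\(([^)\s]+)\)")


def restrict_to_verified_sources(answer: str, allowed_hosts=ALLOWED_HOSTS) -> str:
    """Keep links to allowed hosts; reduce other links to their label and drop other images."""

    def replace(match: re.Match) -> str:
        is_image, label, url = match.groups()
        host = urlparse(url).hostname  # None for relative or malformed URLs
        if host in allowed_hosts:
            return match.group(0)
        return "" if is_image else label

    return MARKDOWN_LINK.sub(replace, answer)
```

A filter like this runs after generation, so it does not depend on the model following its instructions. It only covers Markdown syntax; bare URLs or HTML in the output would need additional handling.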

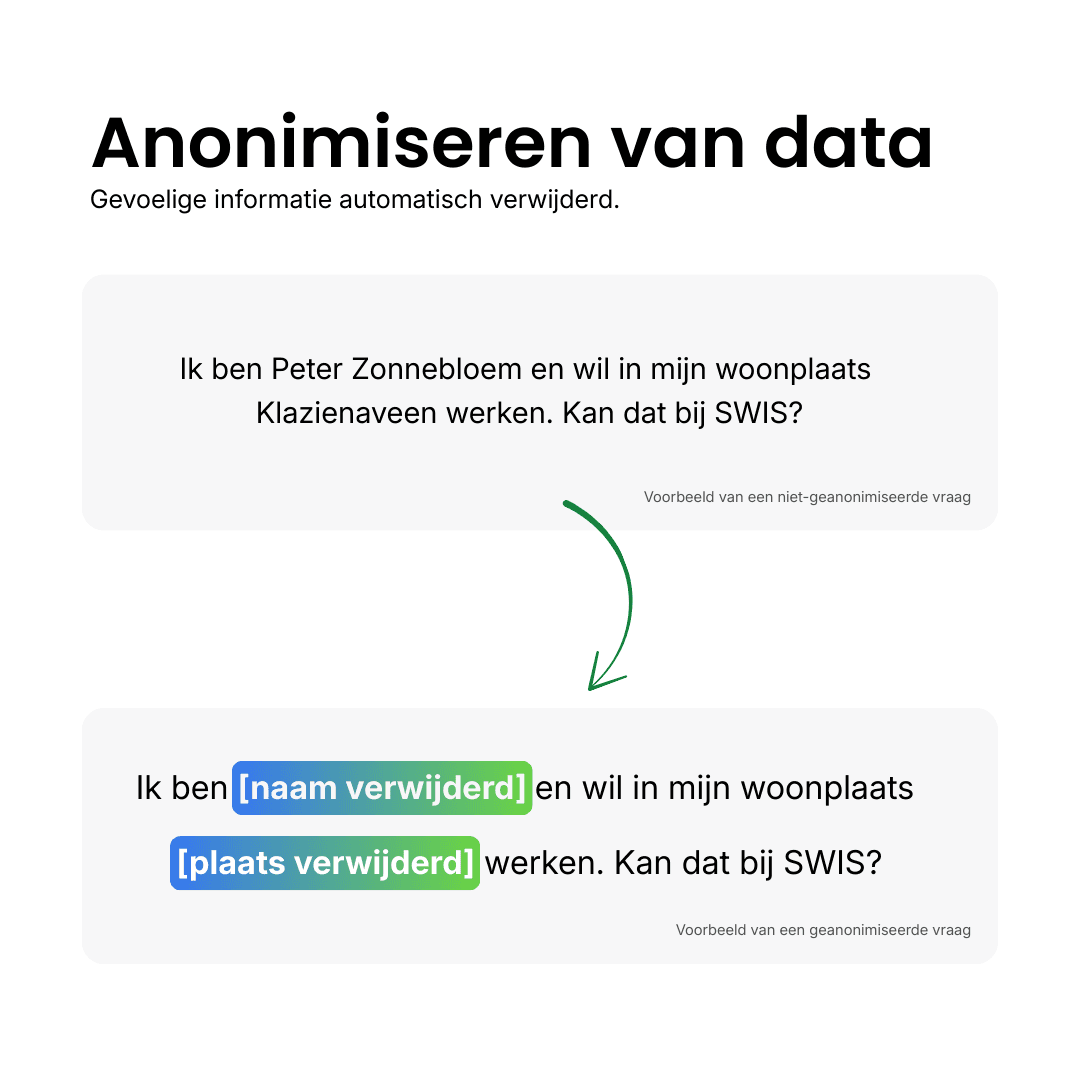

Extra Step: Anonymizing Personal Data

During this project, we also added an extra layer of security. Although Vragen.ai does not usually process personal data, we wanted to prevent users from inadvertently sharing personal information. We therefore developed a feature that automatically anonymizes personal data entered through the open input field. This keeps the system safe and reliable in day-to-day use as well.
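As an illustration of what input anonymization can look like, the sketch below replaces email addresses and phone numbers with placeholders before a question is processed. The patterns, placeholder labels, and function name are assumptions made for this example; they are deliberately simple and are not the detection logic used in Vragen.ai, which may cover many more kinds of personal data.

```python
import re

# Hypothetical patterns: each pairs a placeholder with a regular expression.
PATTERNS = [
    ("[EMAIL]", re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")),
    # A leading "+" or "0", followed by 8 to 13 more digits with optional spaces or hyphens.
    ("[PHONE]", re.compile(r"(?<!\w)(?:\+|0)\d(?:[\s-]?\d){7,12}(?!\w)")),
]


def anonymize(text: str) -> str:
    """Replace recognizable personal data in user input with placeholders."""
    for placeholder, pattern in PATTERNS:
        text = pattern.sub(placeholder, text)
    return text


print(anonymize("Mail me at jan.jansen@example.org or call 06-12345678."))
# prints: Mail me at [EMAIL] or call [PHONE].
```

Regular expressions catch only well-structured data. Names and addresses usually require a different approach, such as named-entity recognition.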

Continuing to Build Safe AI

The test confirms that Vragen.ai can be used safely, even in environments with high requirements for information security. This is not the endpoint, so we continue to develop Vragen.ai together with our clients. The insights from this project help us improve the technology and keep it safe.

This way, we ensure that Vragen.ai is reliable not only today but tomorrow as well.